2026.03.25

Improving Diagnostic Accuracy in Brain Imaging

- Jun Nakamura

- Professor, Faculty of Global Management, Chuo University

Areas of Specialization: Cognitive Science, Artificial Intelligence, Intelligent Information, and Technology Management

1. Origins of my research

Visualizing thinking processes is one of my areas of research. The term "thinking," as used in popular books on particular styles of thinking, tends to refer broadly to all cognitive activity that occurs in the mind. As a result, thinking is often discussed as a vague and abstract concept. In contrast, the term "cognition" is somewhat more sharply defined: it refers to the act of recognizing or understanding something as what it is. My research falls under this more specific domain of cognition and is situated within the broader fields of artificial intelligence, cognitive science, and intelligent information systems. By visualizing people's thought processes, I look for clues that can help solve problems. To that end, I conduct experiments in a variety of real-world settings.

My interest in this research field dates back to my time at IBM. While working there, I had the opportunity to observe researchers in the IBM Research Division, who explored ideas that could have a significant impact on society. I was deeply inspired by the way these researchers generated innovative ideas one after another. It made me wonder whether their constructive thought processes could somehow be reproduced and serve as a basis for innovation. This experience was the starting point for my research.

The following are some of the projects I have worked on:

・ Visualizing moments of insight by means of a web-based game that supports creative activities

・ Visualizing the thought processes of factory workers as they perform their tasks

・ Visualizing the key focus points of gantry crane operators when they transfer containers between ships and trucks at a port

・ Visualizing how a ceramic artist mentally grasps and shapes the unseen underside of a dish or tray while using a potter's wheel

・ Visualizing the psychological sensations experienced while smoking

In this article, I will introduce a case from a joint research project with Dr. Yoshinobu Ishiwata of the Department of Radiology at Yokohama City University Hospital and the system development company Focus Systems Corporation. Our project focuses on the interpretation of head CT images.

2. Detecting incidental findings in CT imaging

Many of my readers may have undergone a head CT or MRI scan as part of a health checkup or comprehensive medical screening. CT uses radiation, whereas MRI relies on magnetic fields, so the two are technologically very different. That aside, both methods ultimately serve the same purpose: to visualize the interior of the head in thin cross-sectional slices. Our research project focuses on visualizing how physicians interpret CT images, specifically where they focus their attention during diagnosis. It also aims to explore what constitutes a more effective approach to interpreting scan images.

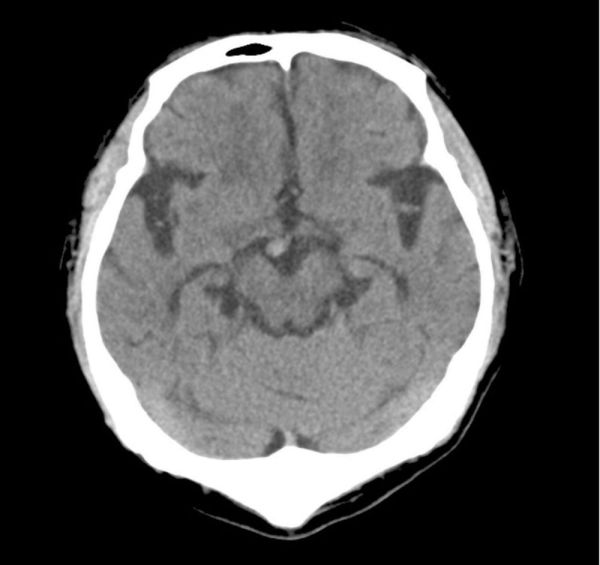

As I do not have a background in medicine, I collaborated on this project with Dr. Yoshinobu Ishiwata of the Department of Radiology at Yokohama City University Hospital. I observed CT scan reading sessions and conducted joint analyses based on the data he generously shared with our research team. At first glance, I could not tell at all which areas of the CT scans indicated incidental findings such as tumors or blood clots. In contrast, Dr. Ishiwata was immediately able to identify and point out areas of concern. For reference, please take a look at Figure 1.

Can you tell where the abnormality is in the image?

Figure 1. CT head scan with an incidental finding

Click below to view the answer. The incidental finding is circled in red.

https://bit.ly/3F2HEfq

3. Key points of checks by radiologists

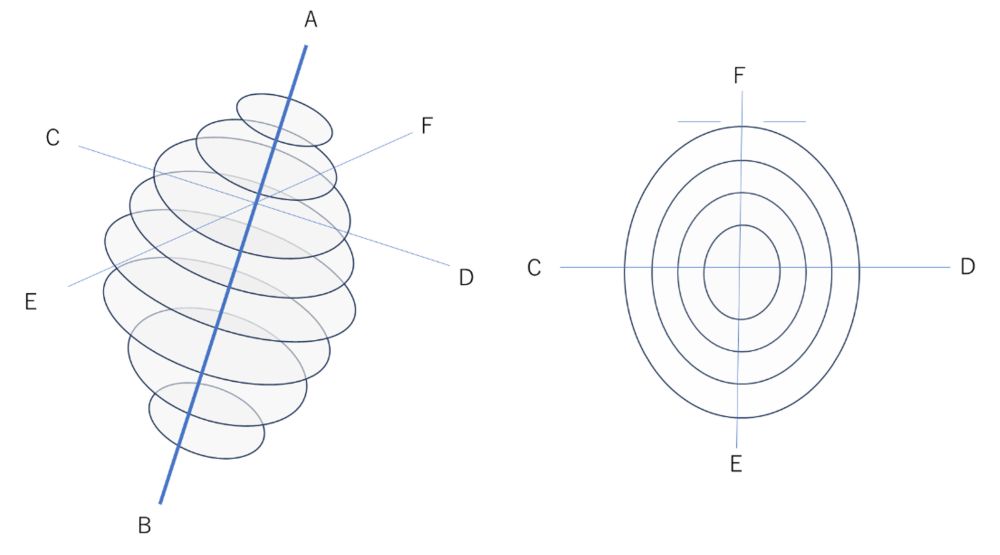

CT image interpretation may be difficult to understand at first. Please allow me to explain using Figure 2. Imagine slicing the head horizontally into eight segments from the top (A) to the bottom (B), as shown in the diagram on the left. When viewed from the top down (from A toward B), the slices appear as shown on the right. While only eight slices are shown in the figure, an actual CT scan typically includes around 50. In this figure, "F" indicates the front of the face with the eyes, nose, and mouth; "E" is the back of the head; and "C" and "D" correspond to the left and right sides of the head, respectively. Although the CT data forms a three-dimensional structure, the images are displayed as two-dimensional slices on a monitor. Physicians scroll through these slices vertically (from point A to point B) while using their spatial awareness to determine whether any incidental findings are present.

Figure 2. Location of slices in CT head scan (left: oblique rear view; right: top of head view)
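The idea of a three-dimensional scan viewed one slice at a time can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the array size, the pixel values, and the function name `slices_containing_signal` are hypothetical and are not taken from the study or from any clinical software.

```python
import numpy as np

# Hypothetical toy volume: 50 axial slices of 64 x 64 pixels (real scans are larger).
NUM_SLICES, HEIGHT, WIDTH = 50, 64, 64
volume = np.zeros((NUM_SLICES, HEIGHT, WIDTH), dtype=np.int16)

# Place a made-up "finding" spanning slices 20 to 22 (illustrative values only).
volume[20:23, 30:34, 40:44] = 1

def slices_containing_signal(ct_volume):
    """Scroll from the top slice (A) to the bottom slice (B) and
    return the indices of slices that contain any non-zero pixel."""
    return [index for index, axial_slice in enumerate(ct_volume) if axial_slice.any()]

flagged = slices_containing_signal(volume)
print(flagged)  # [20, 21, 22]
```

Index 0 along the first axis plays the role of point A and the last index the role of point B; each `volume[i]` is one two-dimensional image of the kind a radiologist sees on the monitor.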

In our experiment, we worked with two radiologists: one highly experienced and the other relatively inexperienced. Each radiologist interpreted 30 CT cases, half of which contained incidental findings while the other half did not. The radiologists were not told in advance whether a finding was present, which is why I use the term "incidental findings." The goal of the experiment was to observe how the two radiologists went about detecting these findings.

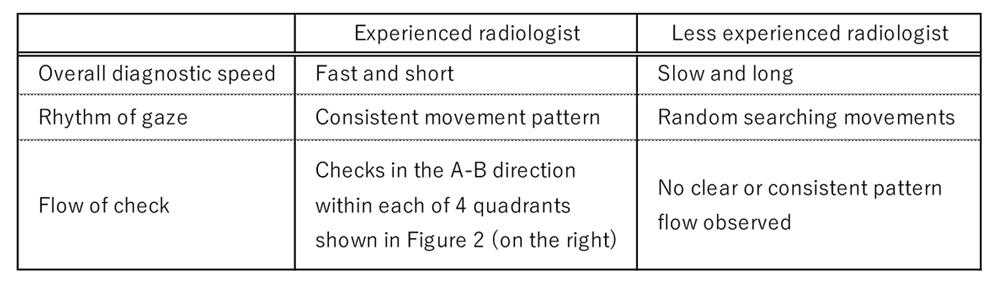

The results clearly showed a difference in diagnostic rhythm between the experienced and less experienced radiologists. A summary of these findings is shown in Table 1.

Table 1. Differences in diagnostic approach between experienced and less experienced radiologists

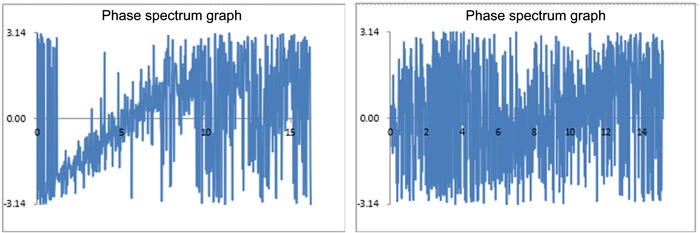

Although experienced radiologists tend to read CT examinations more quickly and efficiently overall, our findings revealed that the rhythm of their eye movement changes when they encounter areas that may contain abnormalities. In such cases, they often slow down and carefully examine these areas with a steady rhythm. Figure 3 illustrates this phenomenon. The graph on the left represents the eye movements of the experienced radiologist, while the graph on the right shows those of the less experienced radiologist. The difference in rhythmic pattern is clearly visible. These results are based on what is known as a phase spectrum. The phase refers to the position of a wave that fluctuates periodically, and the phase spectrum indicates how far the wave is ahead of or behind a reference point in time. A positive phase value means the wave is ahead of the reference, while a negative value indicates a delay. To produce Figure 3, we first recorded the horizontal eye movements of each radiologist as waveforms, with amplitude on the Y-axis and time on the X-axis. These waveforms were then transformed into phase spectra, which show the phase angle for each frequency component.

Figure 3. Rhythm of eye movement during examination (phase spectrum graph: frequency (X-axis) vs. radians (Y-axis))
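For readers curious about how a waveform becomes a phase spectrum, the following is a minimal sketch in Python using a discrete Fourier transform. It is not the analysis code used in our study: the sampling rate, the synthetic gaze signal, and the function name `phase_spectrum` are assumptions made purely for illustration.

```python
import numpy as np

def phase_spectrum(signal, sample_rate_hz):
    """Return (frequencies in Hz, phase angles in radians) of a real-valued signal."""
    samples = np.asarray(signal, dtype=float)
    samples = samples - samples.mean()        # remove any constant offset
    spectrum = np.fft.rfft(samples)           # complex value per frequency component
    freqs = np.fft.rfftfreq(samples.size, d=1.0 / sample_rate_hz)
    phases = np.angle(spectrum)               # phase angle in the range (-pi, pi]
    return freqs, phases

# Synthetic horizontal gaze position (assumed values): a 2 Hz oscillation
# that runs a quarter of pi radians ahead of a plain cosine reference.
sample_rate_hz = 50.0
t = np.arange(200) / sample_rate_hz           # 4 seconds of samples
gaze_x = np.cos(2 * np.pi * 2.0 * t + np.pi / 4)

freqs, phases = phase_spectrum(gaze_x, sample_rate_hz)
k = int(np.argmin(np.abs(freqs - 2.0)))       # index of the 2 Hz component
phase_at_2hz = phases[k]                      # positive: ahead of the reference
```

In this synthetic case only the 2 Hz component carries energy, so only its phase is meaningful; the phase values at other frequencies reflect numerical noise. Real gaze data contain many frequency components, and the pattern of phase angles across them is what a graph such as Figure 3 displays.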

In particular, our findings suggest that the efficiency of image interpretation varies depending on whether or not the radiologist follows a consistent diagnostic policy or approach. This insight points to the potential for applying these results to develop more effective training methods for inexperienced radiologists.

4. Coexisting with generative AI

Generative AI continues to attract widespread attention in the news. In the corporate world, there is growing interest in using generative AI to streamline operations. In educational settings such as universities, active discussions are taking place on how best to engage with this technology. The medical field is no exception, and we are witnessing the appearance of various medical devices incorporating generative AI. These systems can highlight specified findings with a certain degree of accuracy and offer suggestions for potential abnormalities. Typically, such AI systems have high sensitivity but lower specificity; that is, they rarely miss a true abnormality but also flag normal areas as suspicious fairly often. It is therefore ultimately the responsibility of the radiologist to assess these potential findings and determine which warrant further attention.
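The terms sensitivity and specificity can be made concrete with a short calculation. The counts below are hypothetical and chosen only to illustrate the definitions; they are not results from our experiment or from any particular AI product.

```python
def sensitivity_specificity(true_pos, false_neg, true_neg, false_pos):
    """Sensitivity: share of abnormal cases that were flagged.
    Specificity: share of normal cases that were correctly left unflagged."""
    sensitivity = true_pos / (true_pos + false_neg)
    specificity = true_neg / (true_neg + false_pos)
    return sensitivity, specificity

# Hypothetical example: 15 cases with a finding and 15 cases without.
# The imagined system flags 14 of the 15 abnormal cases but also flags
# 6 of the 15 normal cases.
sens, spec = sensitivity_specificity(true_pos=14, false_neg=1, true_neg=9, false_pos=6)
print(f"sensitivity = {sens:.2f}, specificity = {spec:.2f}")
# sensitivity = 0.93, specificity = 0.60
```

In this made-up case, almost no abnormality is missed, yet 6 of the 20 flagged cases are false alarms, which is why a radiologist still has to review every suggestion.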

Dr. Ishiwata and I have also discussed the evolving relationship between AI and medicine. One possible scenario is that radiologists will first interpret CT images on their own, then run AI-integrated software, and finally review the images again while referring to the suggestions from the AI. In the future, radiologists who skillfully incorporate AI into their workflow are expected to produce more accurate interpretations than those who do not. At present, generative AI lacks the ability to understand human intent. However, if future breakthroughs lead to the development of general-purpose AI with capabilities close to those of humans, artificial perception will become essential. In such a case, data on human perception may need to be integrated into AI systems. For the time being, we should not treat AI-generated results as definitive. Just as prompt engineering is becoming increasingly important, the focus of the discussion on AI in clinical settings is also shifting. The key question is no longer simply whether AI should be used, but how we can coexist with generative AI and use it to its full potential in a responsible and effective manner.

The findings described above have been presented as follows.

Nakamura, J., Nagayoshi, S., & Ishiwata, Y. (2025). A Study of Gaze Rhythm During the Interpretation of Computed Tomography Images of the Human Head. Journal of Electrical Electronics Engineering, 4(2), 1-3.

Naito, Y., & Nakamura, J. (2025). Quantitative Evaluation of Two-dimensional Gaze during Image Interpretation by Experienced and Inexperienced Physicians in the Medical Industry. In Proceedings of the International Conference on Business, Economics & Information Technology (ICBEIT2025), held in Mactan, Cebu, Philippines, on 22-23 March 2025.

Naito, Y., & Nakamura, J. (2024). Characteristics of the Reciprocal Movement of Radiographers' Gaze Based on a Comparison of Entry-Level and Experienced Radiographers. Journal of Electrical Electronics Engineering, 3(5), 1-4.

Sugimoto, T., Nakamura, J., & Ishiwata, Y. (2024). Visualization of the Skilled Physician's Gaze Characteristic during Diagnosis. In Proceedings of the 2024 International Conference on Artificial Life and Robotics (ICAROB2024).

Naito, Y., Nakamura, J., & Ishiwata, Y. (2024). Comparative Analysis of Eye Tracking between Veteran and Novice during Radiological Interpretation. In Proceedings of the 2024 International Conference on Artificial Life and Robotics (ICAROB2024).

Kaneo, Y., & Nakamura, J. (2024). A Study on Skill Transfer in the Medical Field -From the Scene of Inspection of Head CT scans. In Proceedings of the 2024 International Conference on Business, Economics, & Information Technology (ICBEIT2024).

Sugimoto, T., Naito, Y., Nakamura, J., & Ishiwata, Y. (2023). Cognitive approach to human resource development - empirical research in medical industry -. In Proceedings of the 19th International Conference on Knowledge-Based Economy and Global Management.

Naito, Y., Sugimoto, T., Nakamura, J., & Ishiwata, Y. (2023). Skill transfer in the medical industry - a case analysis of CT scans -. In Proceedings of the 19th International Conference on Knowledge-Based Economy and Global Management.

Jun Nakamura/Professor, Faculty of Global Management, Chuo University


Jun Nakamura was born in Tokyo in 1963. He graduated from the Faculty of Economics, Keio University in 1986. In 2007, he completed the Master’s Program in Systems Management at the Graduate School of Business Sciences, University of Tsukuba, earning a master’s degree in management. In 2011, he completed the Doctoral Program in the Department of Technology Management for Innovation at the Graduate School of Engineering, the University of Tokyo, earning a Ph.D. in engineering.

In the business sector, he worked at Itochu Corporation, PricewaterhouseCoopers Co., Ltd., IBM Business Consulting Services Co., Ltd., Volvo Group, and the Persol Group. In academia, he served as a Visiting Professor at Kanazawa Institute of Technology and as Professor at Shibaura Institute of Technology. He has held his current position since 2019.

His current research theme is support for creative activities.

His major written works include Bridging the Gap Between AI, Cognitive Science, and Narratology With Narrative Generation (co-authored; published by IGI Global), among others.